What skills and knowledge are developers lacking?

How do developers view their current skill sets?

How do we help developers add to their toolbox of skills?

How can we promote better software engineering practices?

At a time when qualified development talent is at a premium, any organization with a development team must be asking these or similar questions. Identifying and executing initiatives that grow its existing team will improve efficiency and the bottom line while also reducing turnover.

For at least the past year, the software engineering leadership here at Don’t Panic Labs has been interested in learning more about software developer maturity across various levels of experience. Musings on this topic were even the theme of a keynote I gave at Nebraska.Code() in 2021.

Recently, we decided to go straight to the source to begin finding answers to these and other questions: developer conferences. We gave participants ping pong balls to place in tubes to identify their strengths. Each ball color represented a range (in years) of experience; each tube represented a different strength:

- Identifying ambiguity in the requirements I am given

- Using models and diagrams to help reason about a system

- Accurately estimating work to be done

- Designing API and method interfaces

- Testing my code before it gets to QA

- Finding resources to expand my software engineering literacy

What follows are our findings for each strength, the reasons behind each question, and some key questions that emerged after analyzing the results.

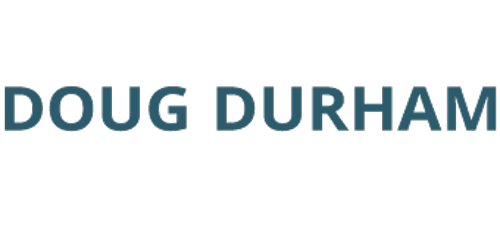

Identifying Ambiguity in the Requirements I am Given

Not surprisingly, as we gain experience, we get better at identifying ambiguity. That said, a surprising number of people in each range still feel this is a weakness. Likely they are uncovering requirements late in development that they felt should have been apparent earlier.

It is easy to envision many projects starting with an expected end date only to see them come under pressure when additional requirement clarifications are identified during development. This likely causes the team to strain to meet the original deadlines and/or negotiate changes in project scope late in the development cycle.

Despite these concerns, you will see that, of all the skills we tested, this one had the highest percentage of people reporting it as a strength.

Key questions emerging from these results:

- How effective are the requirement generation processes in place?

- What activities (if any) are teams using to identify requirements ambiguity and hidden assumptions?

- How aware are the teams of modern techniques for analyzing requirements?

- How might the trust increase between the development team and stakeholders if this became more of a strength?

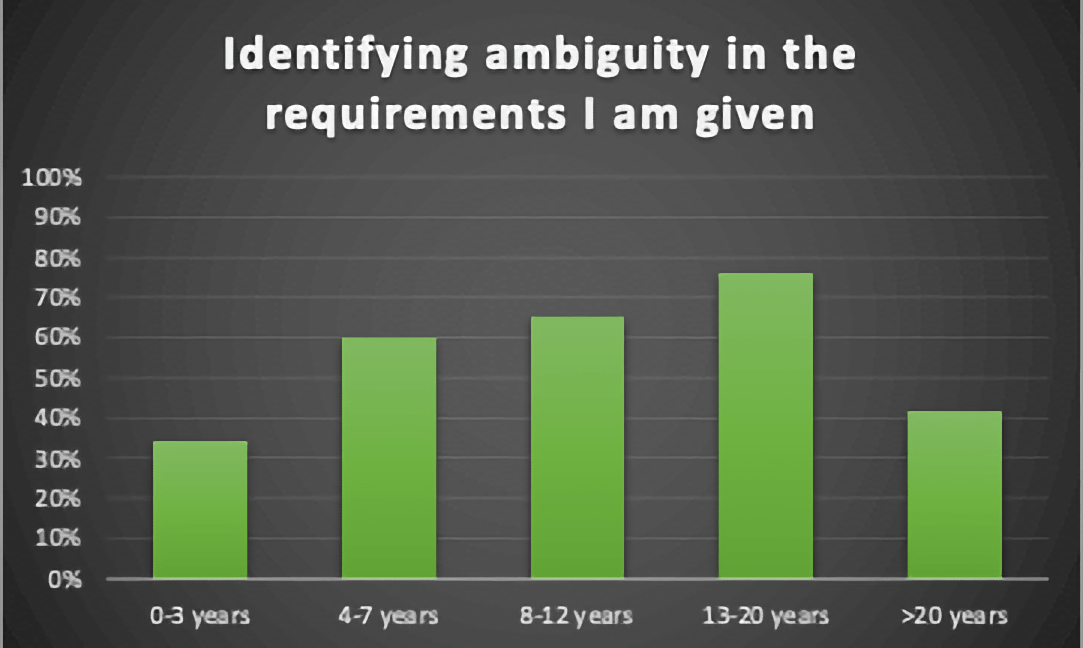

Using Models and Diagrams to Help Reason About a System

Software developers spend more time reading code than writing code. Analyzing and reasoning about existing code is necessary when making changes or adding features to ensure that the software works as intended and that no unintended behavior changes are introduced.

According to our survey, almost no one feels existing tools for modeling software systems are accessible to them. As a result, developers are likely left with only one option for understanding the design and behavior of a system… poring over the code. This is akin to asking someone to understand and analyze the design and details of a house without the benefit of a blueprint.

People with skills using these types of tools have usually spent a fair amount of time reading and researching software design and architecture. I suspect the percentage of developers who actually do this is small, which is why I think these results are low across the board. Unfortunately, the curriculums of our formal education programs are tragically light on software engineering, in general, and design, in particular.

Key questions emerging from these results:

- What kind of velocity improvement might we see with better (i.e., non-code) ways of expressing the structure and behavior of our systems?

- What can be done to improve the software design and modeling literacy of software developers?

- How might using these tools increase the mobility of people within an organization as it becomes easier for them to understand unfamiliar systems?

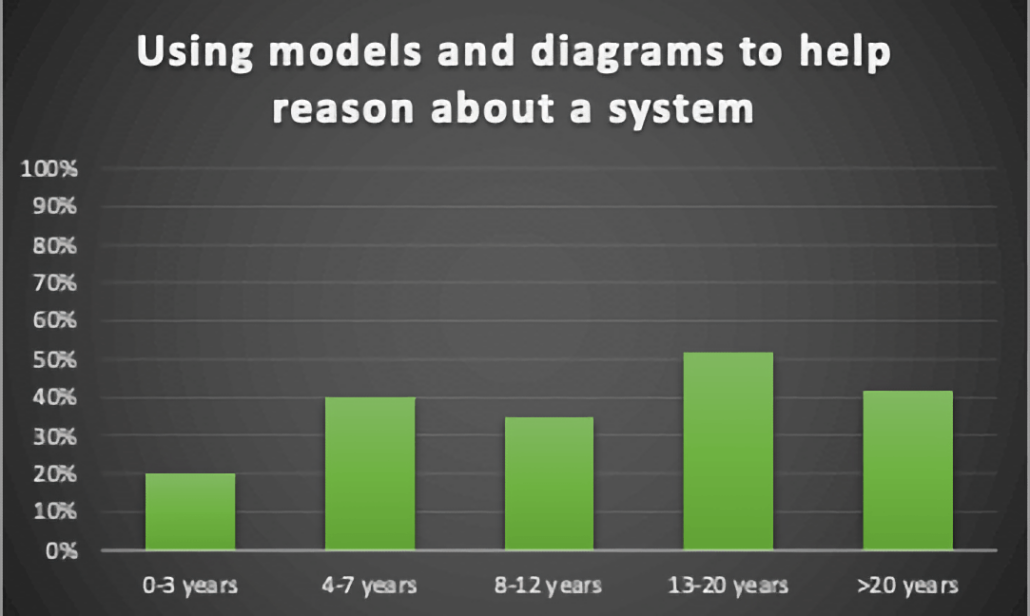

Designing API and Method Interfaces

The quality of API and method signature design directly impacts the level and quality of the coupling within a system. Improper decomposition and poor API/method design ultimately lead to a loss of design stamina and the “big balls of mud” we are all familiar with. Unfortunately, most people in a position to make these types of design decisions do not feel like this is an area of strength.

It’s never one decision that causes systems to decay; rather, it is the accumulation of many small decisions that causes software entropy. As mentioned earlier, there needs to be more emphasis on design in our education programs. Additionally, most people in this career field are unfamiliar with concepts like information hiding and do not have the skills and experience to recognize the impact of their decisions and properly evaluate tradeoffs. Combine all of this with how rarely unit tests are used to improve our designs, and the end result is inevitable… a big ball of mud.
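To make information hiding concrete, here is a minimal sketch in Python. Every name in it (`leaky_total`, `Order`, `add_item`, `total_cents`) is hypothetical and invented for illustration; none of it comes from the survey or from any particular codebase. The first function forces every caller to know how an order is stored, while the class keeps that detail behind a narrow interface.

```python
# Leaky design: callers must know that an order is a list of
# (name, unit_price_cents, quantity) tuples. Changing that layout
# breaks every caller that builds or reads the list.
def leaky_total(order_rows):
    return sum(row[1] * row[2] for row in order_rows)


class Order:
    """Hypothetical order type that hides how line items are stored."""

    def __init__(self) -> None:
        # Internal detail: name -> (unit_price_cents, quantity).
        # Callers never touch this, so it is free to change later.
        self._items = {}

    def add_item(self, name: str, unit_price_cents: int, quantity: int = 1) -> None:
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        _, existing_quantity = self._items.get(name, (unit_price_cents, 0))
        self._items[name] = (unit_price_cents, existing_quantity + quantity)

    def total_cents(self) -> int:
        return sum(price * quantity for price, quantity in self._items.values())
```

With the second design, swapping the dictionary for a database row or a list of objects only touches `Order` itself. That is one small decision of the kind that, repeated across a system, either preserves or erodes design stamina.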

Key questions emerging from these results:

- How much scrutiny is given to these design choices during code reviews and pull requests?

- How involved are the most senior people in the organization in reviewing and approving the API and method signature decisions that less experienced developers are making?

- What might be the impact on agility and design stamina if this became an area of strength?

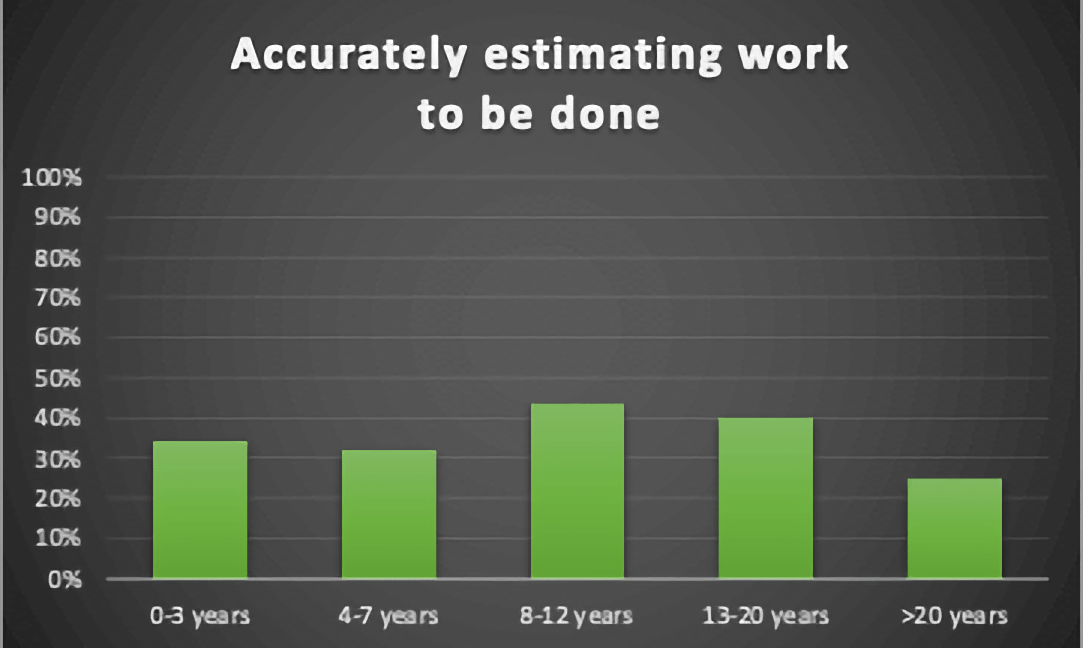

Accurately Estimating Work to be Done

Completing sprints and hitting business-driven deadlines is critical to both the business’s success and the trust stakeholders have in their software development teams. If fewer than half of developers, regardless of experience, feel confident in their ability to accurately estimate their work, there will be a number of downstream negative impacts that will affect developer satisfaction and stakeholder confidence.

Poor estimation confidence can be a “canary in the coal mine” indicating other challenges and risks such as a lack of a shared understanding, ambiguity/uncertainty with the requirements, lack of understanding of the existing system, and lack of competency in new technologies.

Key questions emerging from these results:

- How has the inability to accurately estimate work impacted the relationship between the development teams and stakeholders?

- How are teams currently leveraging estimation within their process?

- What differentiates good estimators from poor estimators?

- What tools and techniques might teams leverage to improve this skill?

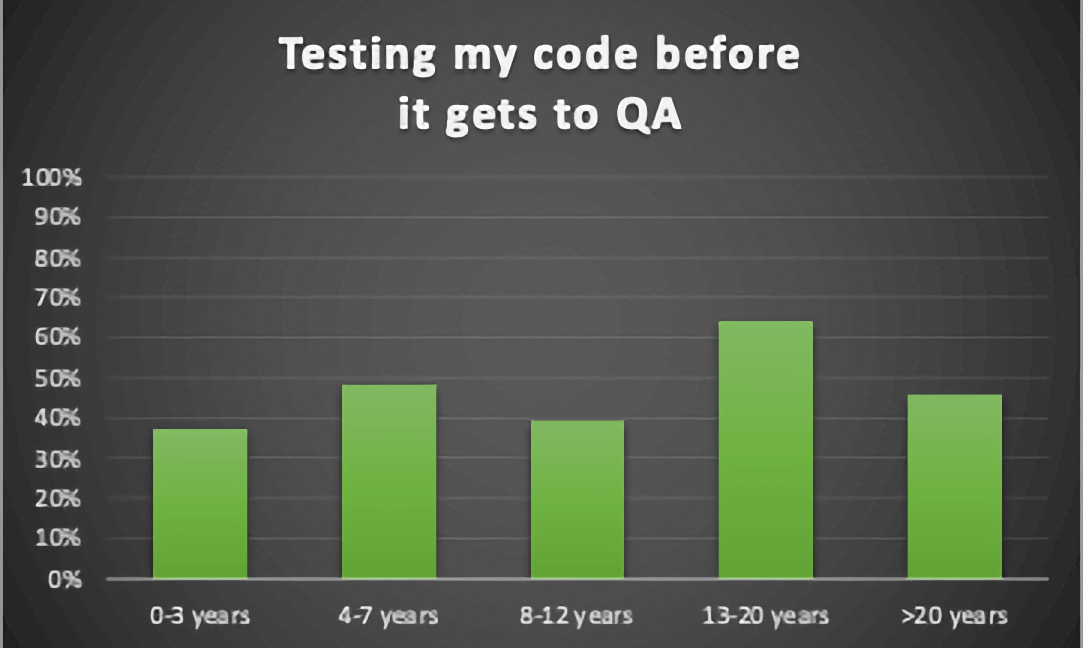

Testing My Code Before It Gets to QA

On the one hand, the results seem to indicate that we are getting better at testing our code, and more willing to do so, before we release it to others. On the other hand, it still seems unacceptable that a skill so fundamental to the quality of a project is perceived as a strength by fewer than 50% of the people whose job is to design and develop software.

We are adding a lot of risk and potential rework to our systems if our dev teams do not feel confident in their approach or ability to test their software. This is likely a design problem (can’t test) and/or a process problem (not sure what tests they need to feel confident).
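The "can't test" design problem can be illustrated with a minimal sketch, again in Python and again with hypothetical names (`greeting_untestable`, `greeting`, `test_greeting`). The first function reads the real clock directly, so its result depends on when the test happens to run. The second takes the clock as a parameter, which is one common way (not the only one) to make such code testable.

```python
from datetime import datetime
from typing import Callable


# Hard to test: the result depends on the real clock at call time.
def greeting_untestable() -> str:
    hour = datetime.now().hour
    return "Good morning" if hour < 12 else "Good afternoon"


# Easier to test: the clock is passed in, so a test can supply a fixed time.
# Production callers can rely on the default and ignore the parameter.
def greeting(now: Callable[[], datetime] = datetime.now) -> str:
    hour = now().hour
    return "Good morning" if hour < 12 else "Good afternoon"


def test_greeting() -> None:
    # Fixed, made-up times stand in for the real clock.
    assert greeting(lambda: datetime(2023, 1, 2, 9, 0)) == "Good morning"
    assert greeting(lambda: datetime(2023, 1, 2, 15, 0)) == "Good afternoon"
```

The change is small, but it turns a function that can only be checked by hand at the right time of day into one that a developer can verify before the code ever reaches QA.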

Key questions emerging from these results:

- Given how much is known about designing testable software and effective tests, what is the core reason developers lack confidence in their ability to test their code?

- How much rework is avoidable if we can improve this one skill set?

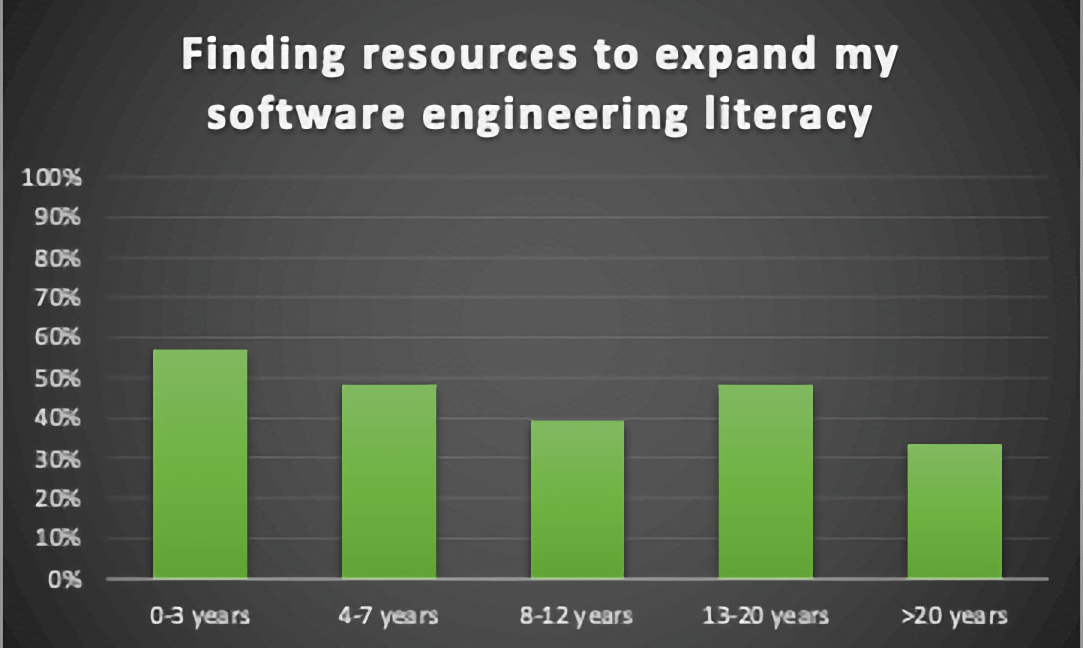

Finding Resources to Expand My Software Engineering Literacy

As people move away from simply needing to write code, they are finding it more and more difficult to grow in the other areas of software engineering. Clearly, we need some targeted professional development to fill this need.

Looking at this chart reminds me of an experience I had in college. As an undergraduate electrical engineering student, I once had a professor state that his goal was not that we memorize all of the content in our courses but that we know where to find the answers. An enormous body of knowledge exists for software engineering, yet most developers are not sure where or how to gain access to it.

Key questions emerging from these results:

- What tools could we make available to make the software engineering body of knowledge more visible and accessible?

- How might we enable software developers to assess their familiarity with this body of knowledge and their level of software engineering literacy?

General Observations

In addition to the skill-specific observations above, we were able to make a few general observations about these results.

- People in our field lack confidence and skill in key software engineering activities and feel they don’t have access to the resources necessary to improve.

- Some folks seem to be gaining confidence and skill on the job with a significant jump in the first few years, but then it tapers off.

- Many super-experienced people (>20 years) feel their skills have diminished, likely due to taking on more management roles.

Addressing the first bullet is something we at Don’t Panic Labs have been thinking about for the last four to five years. We have even developed some content and educational programs to better understand how to effectively address these needs and scale a solution.

Starting in 2023, we will be piloting a new approach to professional development education for software developers that is aligned with the software engineering body of knowledge. We will be talking more about this pilot in the future. If you want to learn more and/or are interested in possibly being a part of the pilot, please reach out to us at [email protected].

This post was originally published on the Don’t Panic Labs blog.